For our computers at home we have a small network based on a standard dual-radio N/G wireless router with 4x 1Gb Ethernet ports. However, wireless performance in my office, which is about 30 feet away from the router, is poor (maybe 2 Mb/s). We fixed this by creating an Ethernet over Power Line network (using the Netgear XAVB101), which works fine for most applications.

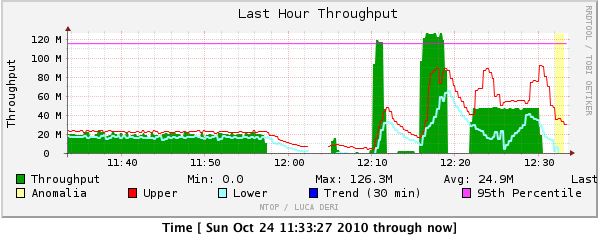

The one area that didn’t work well was transferring large files to and from our Network Attached Storage (a 1 TB Buffalo LS-WTGL, predecessor to this model). Transfer speed from my MacBook Pro maxed out around 20 Mb/s. In other words, filling the 1 TB drive would take about 4.5 days. That’s a bit slow. How to fix it after the break.
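For the curious, the "about 4.5 days" figure can be checked with a few lines of Python. This is only a rough sketch: it assumes a decimal terabyte (10^12 bytes), reads "20 Mb/s" as 20 megabits per second, and ignores protocol overhead. The 1 Gb/s line is the nominal Gigabit Ethernet rate for comparison, not a measured number, and `transfer_days` is just a helper name made up for this example.

```python
SECONDS_PER_DAY = 86_400
TB = 10**12  # assumption: decimal terabyte, in bytes


def transfer_days(capacity_bytes: float, rate_bits_per_s: float) -> float:
    """Days needed to move capacity_bytes at a sustained rate in bits/s."""
    seconds = capacity_bytes * 8 / rate_bits_per_s
    return seconds / SECONDS_PER_DAY


# Measured powerline rate from the post: about 20 Mb/s
print(round(transfer_days(TB, 20e6), 1))  # 4.6

# Nominal Gigabit Ethernet line rate (theoretical best case)
print(round(transfer_days(TB, 1e9), 2))   # 0.09
```

So at 20 Mb/s the drive takes roughly four and a half days to fill, while at the nominal GbE rate it would be a bit over two hours in the best case.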

Continue reading “For NAS on the Home Network, it turns out GbE matters”

The project that currently takes up the majority of my time at Stanford is